Towards a naive Moving Object Processing System (and a lot of linking enhancement)

Here I will show the process I use to detect and link moving objects in a series of TESS images and then generate MPC formatted observations for those objects. Pan-STARRS and the Rubin Observatory (LSST) refer to automated systems that detect, link and generate moving object observation coordinates as Moving Object Processing Systems, or MOPS. What I've built is in no way as automatic, robust or sophisticated, so I refer to it as naive MOPS (nMOPS). My nMOPS is basically an interactive script with a few tweakable, user-defined parameters that I run against a set of images. It calculates difference images, detects and links objects within them, and outputs the observation coordinates for those objects. The terms that I'm using are defined in the definitions section of the process description.

Difference images and linked detections

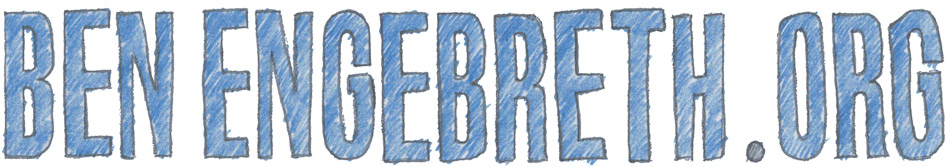

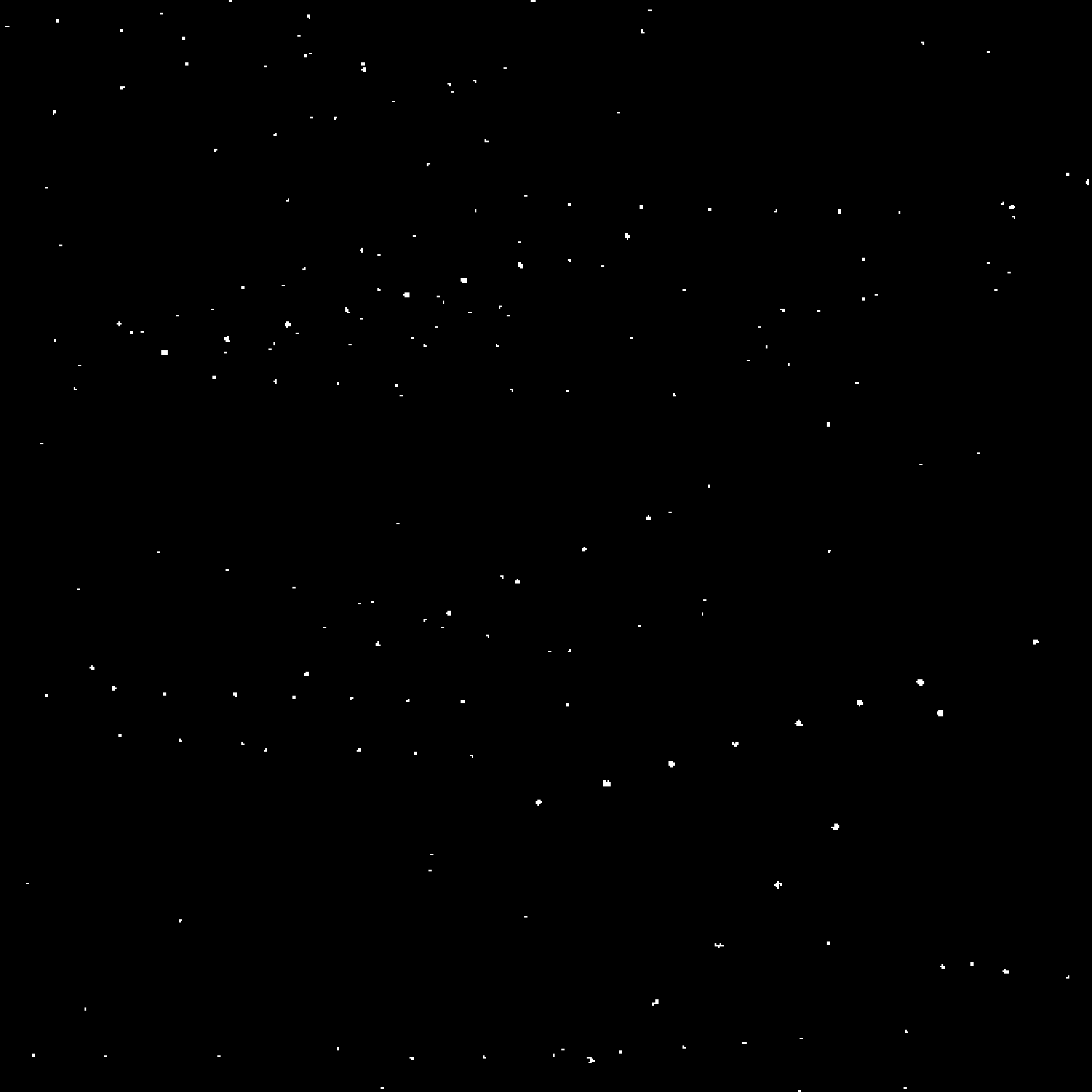

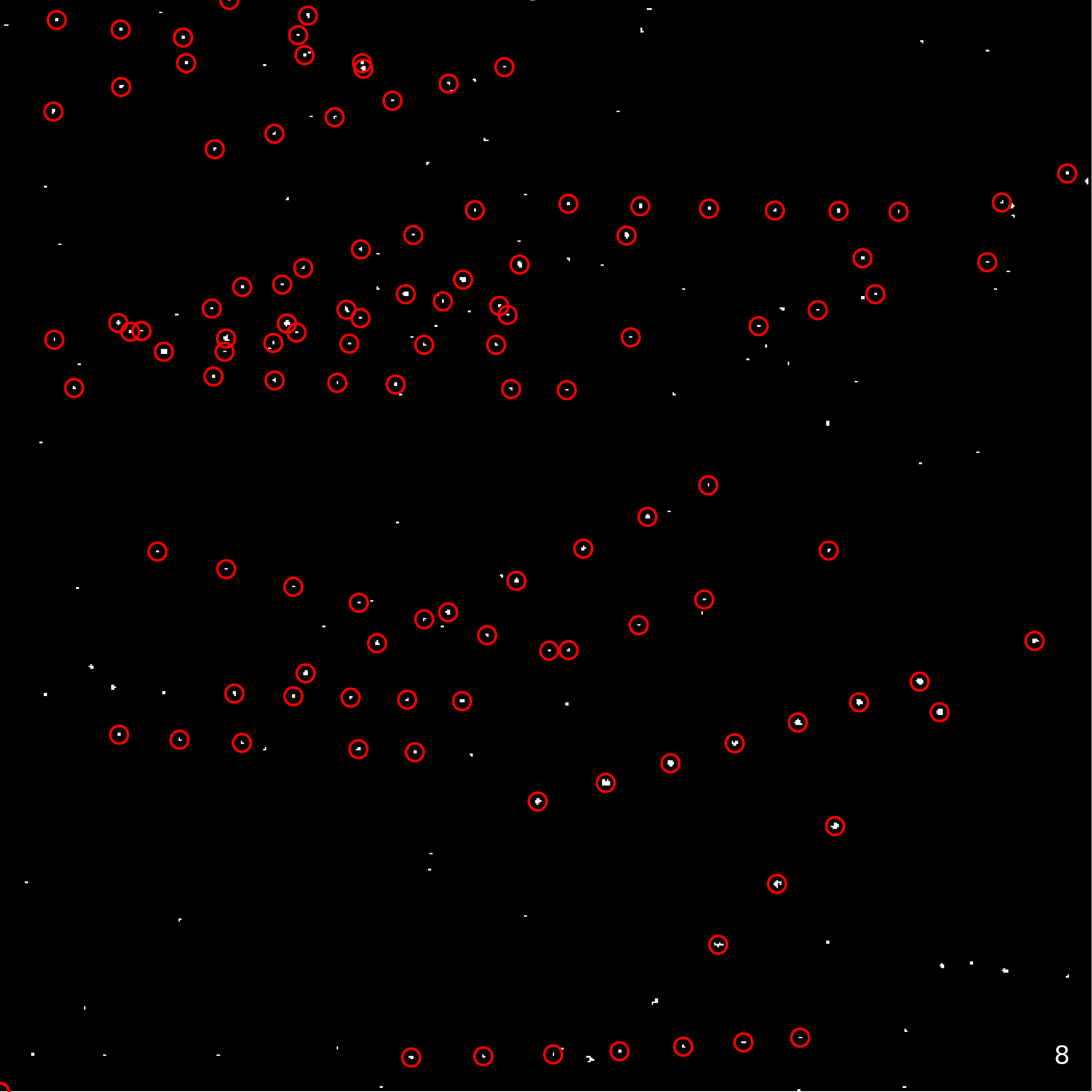

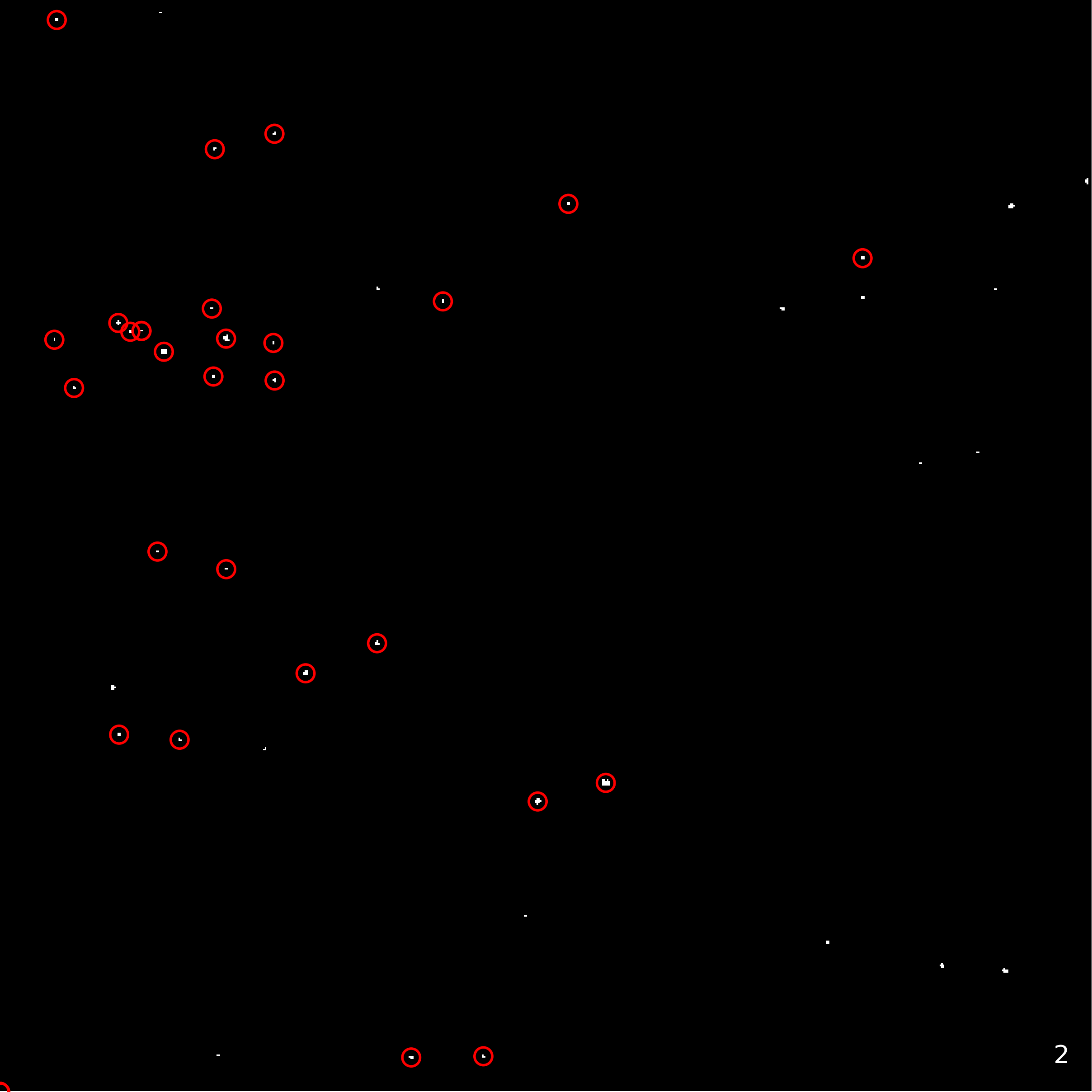

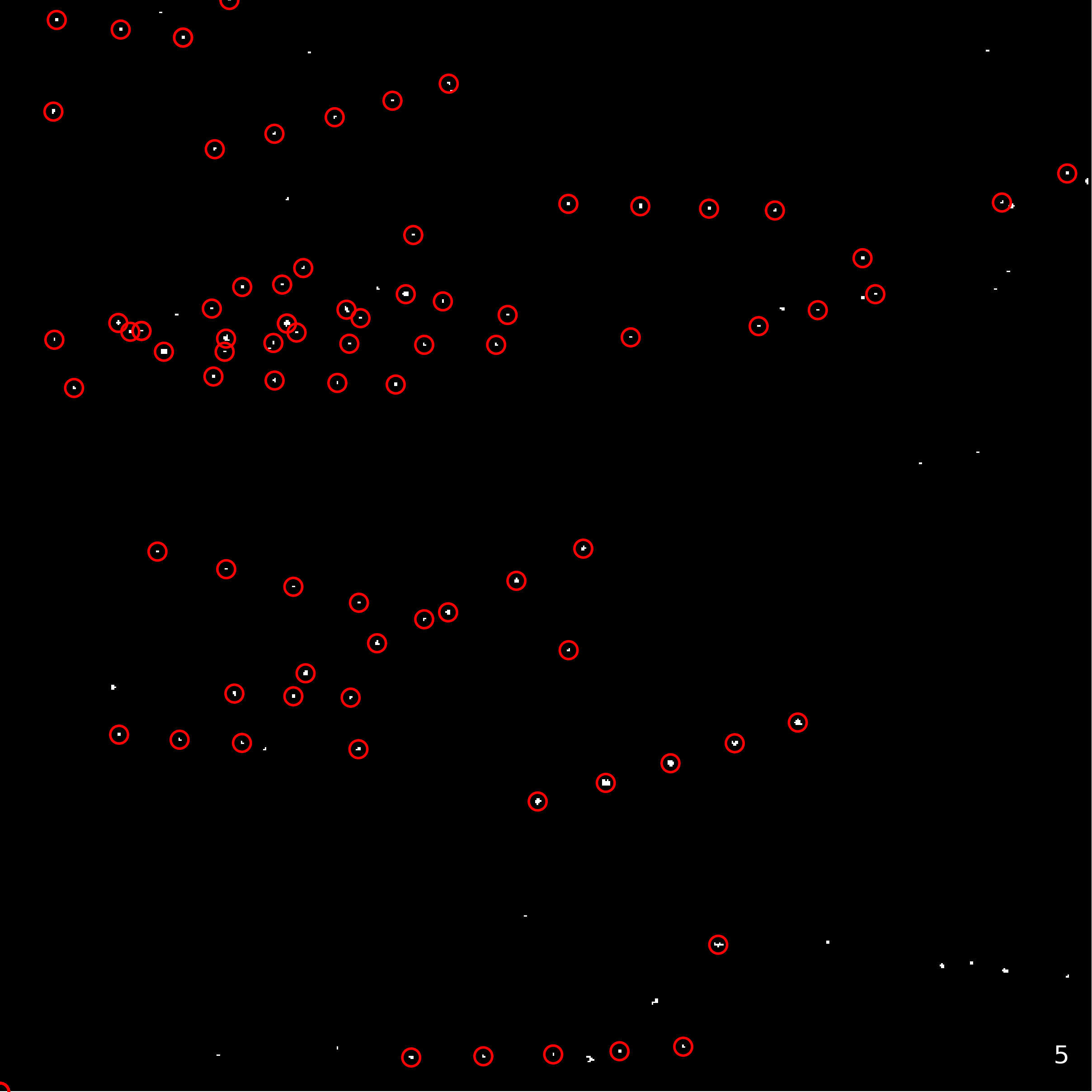

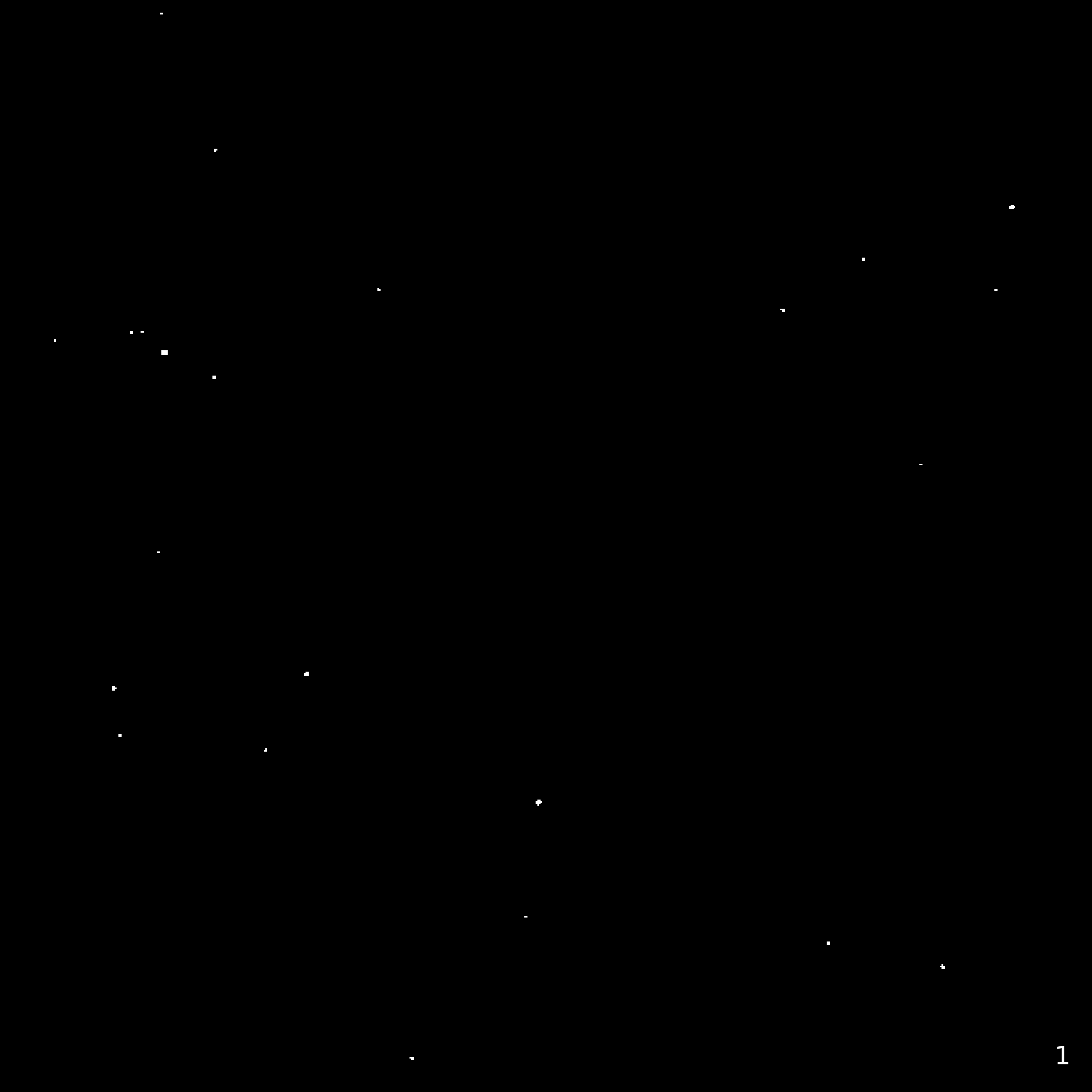

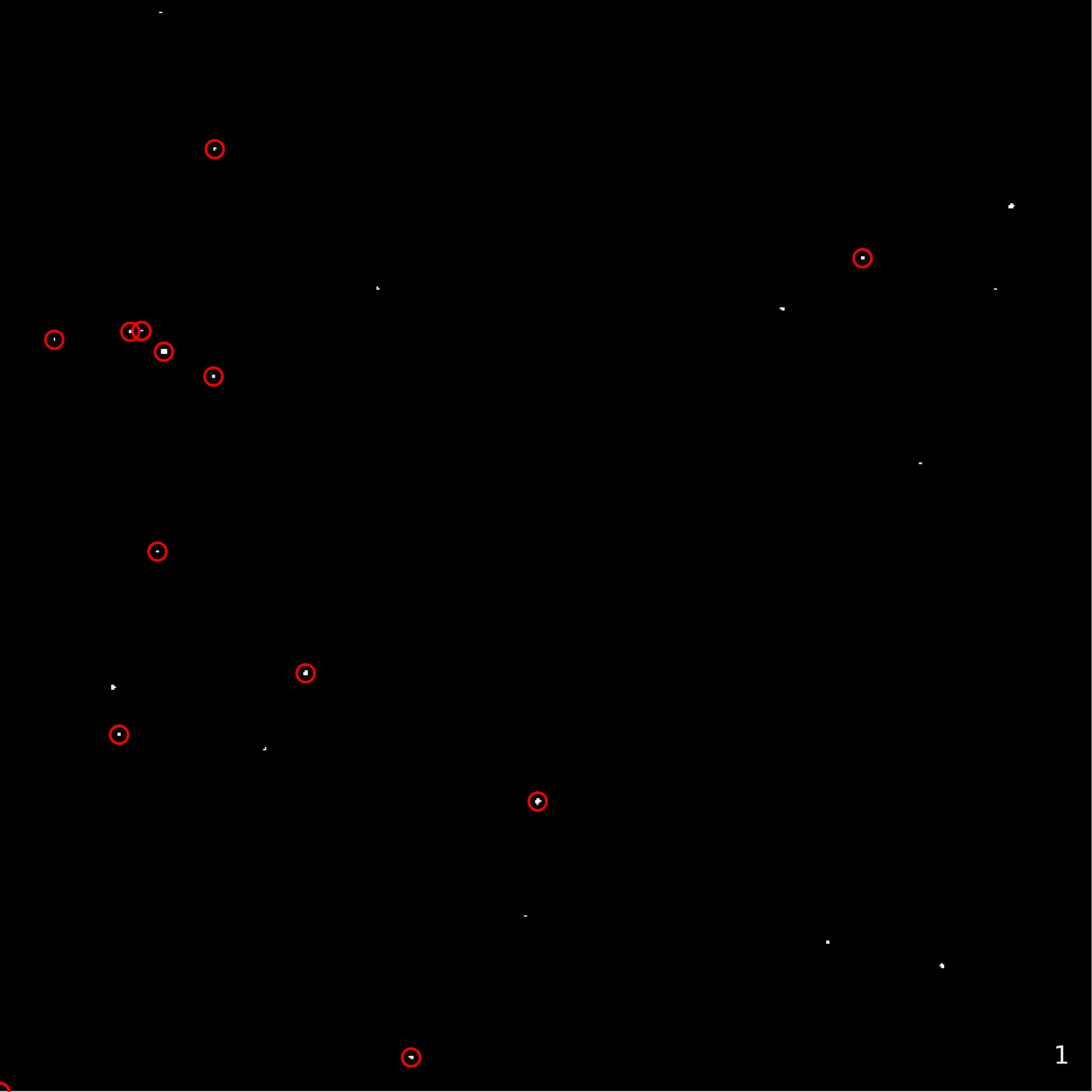

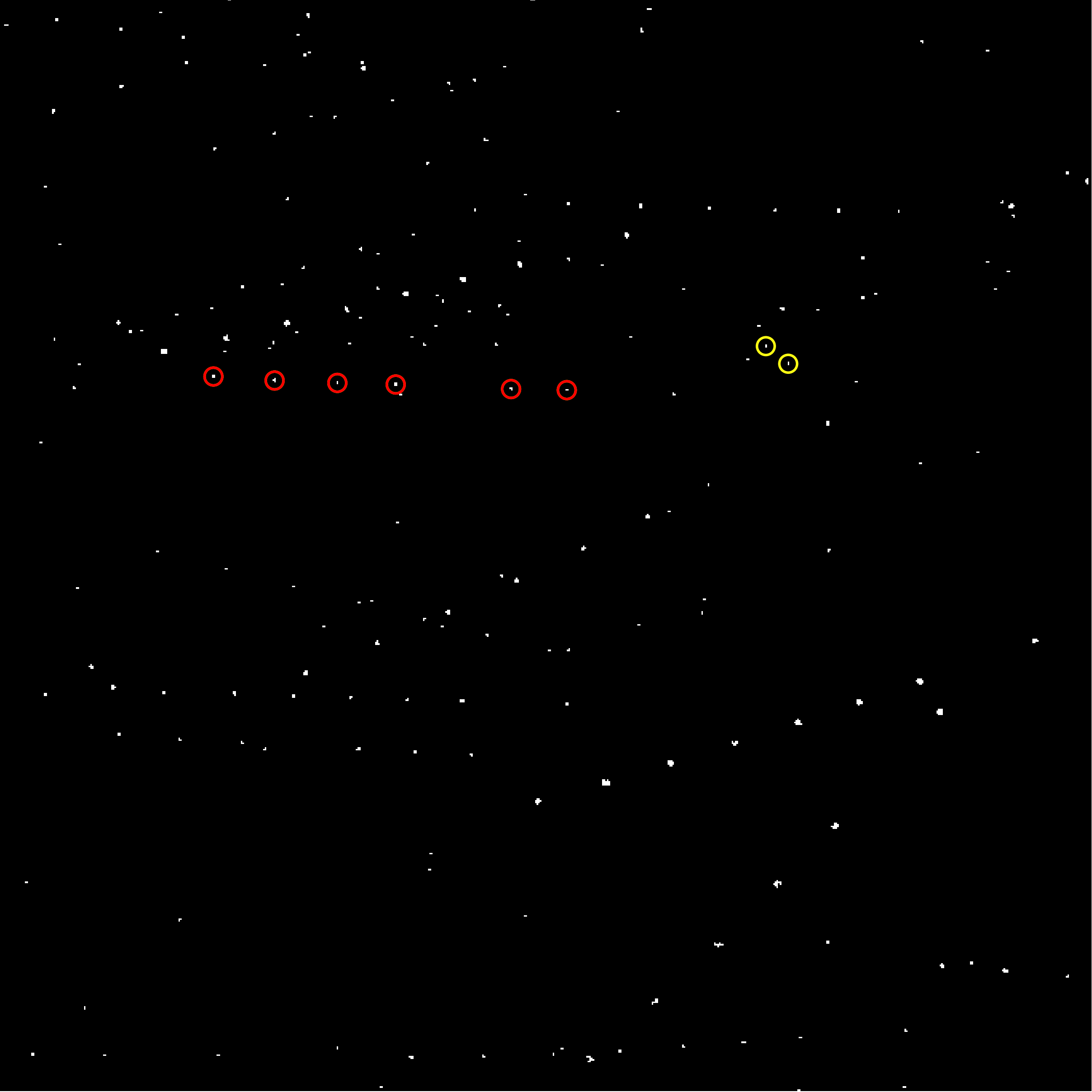

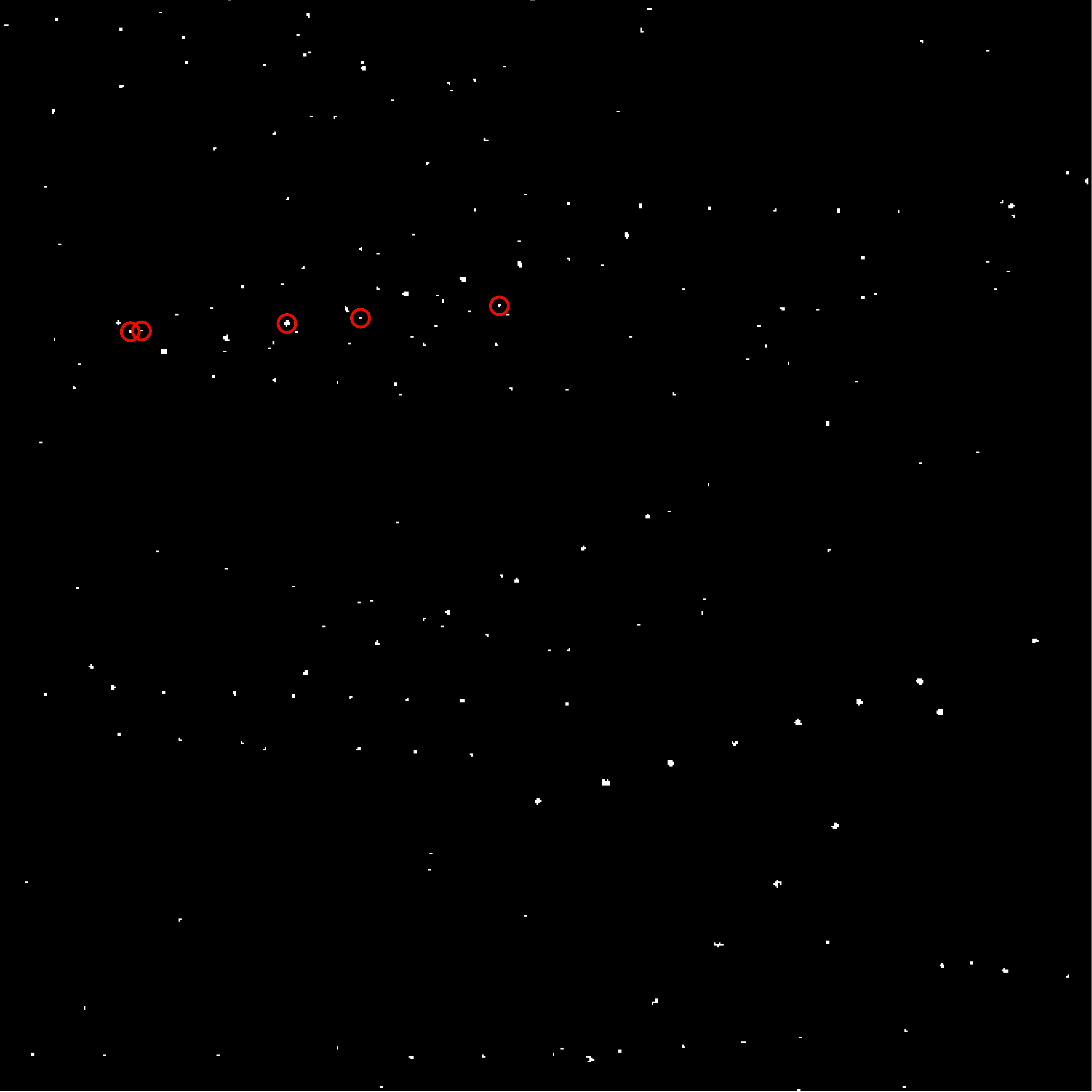

The two image sets below show the results of image differencing (image set 1) and the final set of linked detections (image set 2). The difference images are thresholded at 3σ and single-pixel detections have been removed to limit the number of detections and keep this analysis tidy. I've talked about image differencing at length before, so I won't go into it any further here. You can see my previous work here or the process description for more details. The linking process has been substantially extended though, so I will outline the details of that process next.
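As a rough illustration of the thresholding and small-detection removal described above, here is a minimal sketch. It is not the nMOPS code: the function names are hypothetical, σ is assumed to be the plain standard deviation of the whole difference image, blobs are assumed to be 8-connected, and the image is a plain list of rows to keep the example dependency-free.

```python
from statistics import mean, pstdev


def threshold_mask(diff, n_sigma=3.0):
    """Flag pixels brighter than mean + n_sigma * std of the difference image."""
    flat = [v for row in diff for v in row]
    cut = mean(flat) + n_sigma * pstdev(flat)  # assumed definition of the 3-sigma cut
    return [[v > cut for v in row] for row in diff]


def remove_small_detections(mask, min_pixels=2):
    """Keep only 8-connected blobs that contain at least min_pixels pixels."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    cleaned = [[False] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Flood fill one blob starting from this pixel.
            stack, blob = [(r, c)], []
            seen[r][c] = True
            while stack:
                y, x = stack.pop()
                blob.append((y, x))
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < rows and 0 <= nx < cols
                                and mask[ny][nx] and not seen[ny][nx]):
                            seen[ny][nx] = True
                            stack.append((ny, nx))
            if len(blob) >= min_pixels:
                for y, x in blob:
                    cleaned[y][x] = True
    return cleaned
```

With `min_pixels=2`, a lone bright pixel is dropped while a two-pixel blob survives, which is the effect the 1px removal is meant to have.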

1. 3σ-thresholded difference images with small (1px) detections removed to reduce detections to a manageable level. Derived from eight 3-frame median-integrated 683x683 pixel TESS FFI cutouts. The FFIs are from TESS sector 3, camera 1, CCD 3. Note: the observation frames are equally spaced in time (24 hours apart) except for frames 7 and 8, which are 48 hours apart.

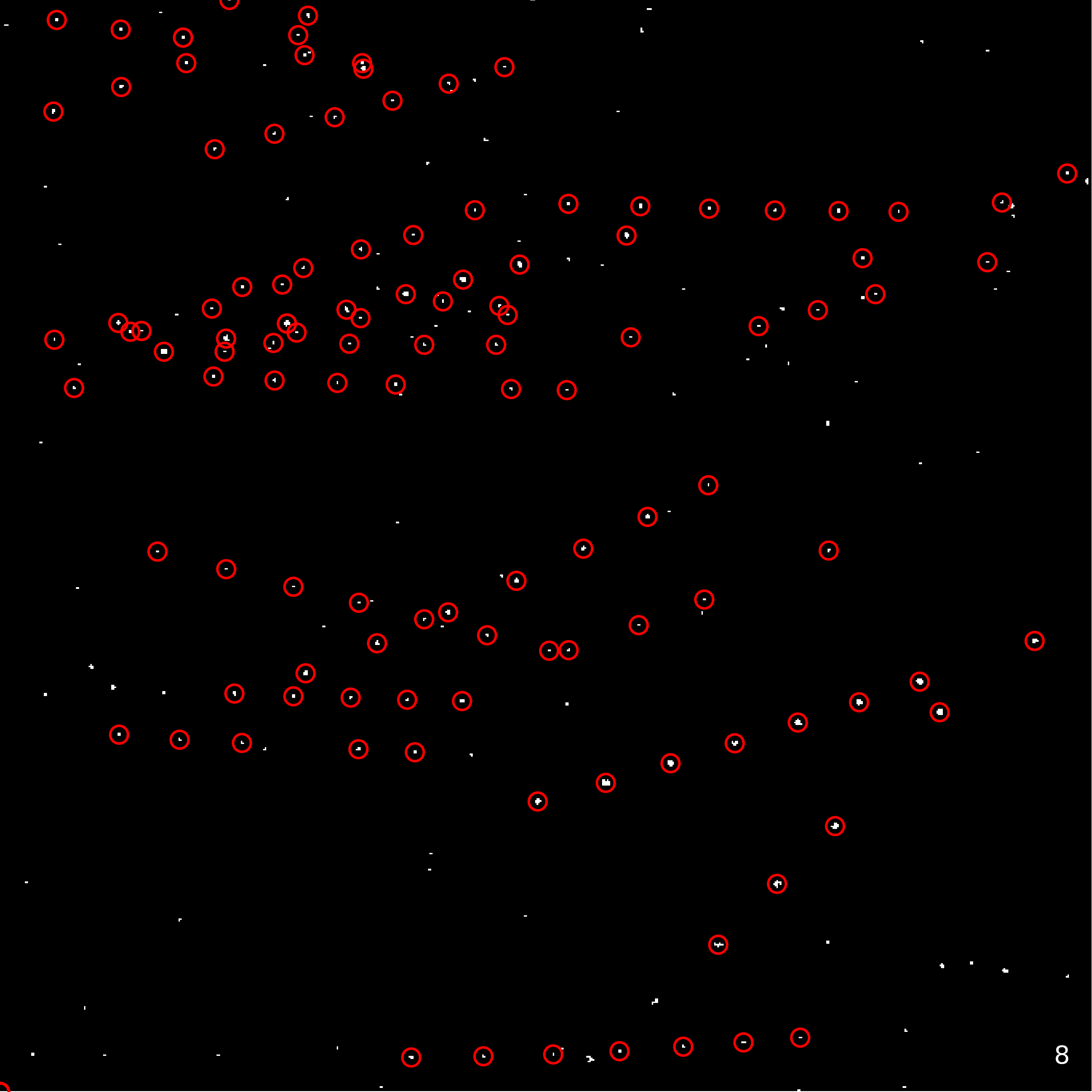

2. Final tracks for detections in image set 1 difference images after the 3 step linking process explained below.

Linking: a 3 step process

First a couple of definitions. Linking is the process of connecting a detection at one observation time to detections at other observation times for the same physical object. If you look at the composite difference image in image set 1, your eye can see the linear tracks that represent individual objects across time. Linking is the process of algorithmically joining those. Linking detections creates tracks. Tracks are 3 or more detections that have been linked across time and correspond to the same object. In this analysis I accomplish linking via the following three steps:

- Create preliminary candidate tracks by clustering the velocity vectors of the detections with respect to one another.

- Using the orbit determination (OD) software OpenOrb, attempt to solve all 3-detection subtrack combinations for each preliminary candidate track.

- Relink solved short-arc subtracks that share two detections to build up the longest track made of subtracks that solve.

Step 1: I've described the technique I'm using to do preliminary linking and generate candidate tracks before, but in brief, I look for detections that are moving at similar velocities with respect to one another. Over short time spans, the arcs traced by objects in orbit are relatively linear, so the same object moves at a similar rate with respect to itself across observations.
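One way to picture the velocity idea in step 1 is the sketch below. It is an assumption-laden illustration, not the actual preliminary linking code: `candidate_tracks`, `vel_tol` and the `(t, x, y)` detection tuples are all hypothetical, and the "clustering" is a simple greedy grouping of velocity vectors measured from each anchor detection.

```python
from math import hypot


def candidate_tracks(detections, vel_tol=0.5, min_len=3):
    """Group detections whose velocity relative to an anchor detection agrees.

    detections: list of (t, x, y) tuples, t in days and x, y in pixels.
    vel_tol: largest velocity difference (pixels/day) treated as "similar".
    """
    dets = sorted(detections)
    tracks = set()
    for i, (t0, x0, y0) in enumerate(dets):
        groups = []  # each entry: (vx, vy, [detections moving at that velocity])
        for t, x, y in dets[i + 1:]:
            if t == t0:
                continue  # same observation time, no velocity can be defined
            vx, vy = (x - x0) / (t - t0), (y - y0) / (t - t0)
            for gvx, gvy, members in groups:
                if hypot(vx - gvx, vy - gvy) <= vel_tol:
                    members.append((t, x, y))
                    break
            else:
                groups.append((vx, vy, [(t, x, y)]))
        for _, _, members in groups:
            track = ((t0, x0, y0), *members)
            if len(track) >= min_len:
                tracks.add(track)
    # Drop candidates that are wholly contained in a longer candidate.
    return [t for t in tracks if not any(set(t) < set(o) for o in tracks)]
```

Nothing here stops two detections from the same frame landing in one candidate, and nothing checks that the motion is physical. That is the job of the OD filtering and relinking steps.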

Step 2: With preliminary candidate tracks in hand, we can filter non-physical tracks by testing whether an orbit can be calculated for the detections that make up the track. The best way I've found to do this to date is to attempt to solve all unique 3 detection combinations for a given preliminary track and then repeat this across all preliminary tracks. Let's call each of these 3 detection subcomponents of a track a subtrack. Now, say there are 35 separate candidate tracks from step 1 and that candidate track 1 is composed of 5 detections. The number of ways to choose 3 detections from a set of 5 is C(5,3)=10. So for this example I would try to solve 10 potential subtracks in this first candidate track. Then I would do the same thing for candidate tracks 2 through 35. In this way we determine which of the candidate tracks from preliminary linking correspond to (likely) real physical orbits.
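The subtrack enumeration in step 2 maps directly onto `itertools.combinations`. In this sketch `solves` is a hypothetical callable standing in for whatever wraps the OpenOrb run. OpenOrb's real interface is not shown or assumed here.

```python
from itertools import combinations
from math import comb


def solved_subtracks(track, solves):
    """Return every 3-detection subtrack of track for which solves(subtrack) is true."""
    return [sub for sub in combinations(track, 3) if solves(sub)]


# A candidate track with 5 detections yields C(5,3) = 10 subtracks to test.
track = ["d1", "d2", "d3", "d4", "d5"]
all_subtracks = list(combinations(track, 3))
assert len(all_subtracks) == comb(5, 3) == 10
```

The count grows quickly with track length (an 8-detection candidate has 56 subtracks), which is why rejecting bad candidates before OD can matter for runtime.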

Step 3: Now we have a set of zero or more subtracks that solve for each candidate track. Obviously, if there are no subtrack solutions we discard the candidate track. But for most candidate tracks there are numerous, overlapping subtracks that solve. To reassemble the final set of tracks in this study I relink subtracks that have two overlapping detections and do not span more than 3 frames (a frame being an observation time here). That first part is probably easy to understand: if you have two subtracks that have 2 out of 3 detections in common and both solve with OD, they are likely both subsets of the observations of the same object. The short arc part was something I implemented to resolve the issue of, for example, a subtrack consisting of detections in frames 1, 2 and 8. It might solve with OD, but that lengthy span between frames 2 and 8 tends to indicate a spurious solution. So I require subtracks to have relatively short arcs in order to be relinkable into final tracks. A detection in frame 8 can still be relinked into the track if it overlaps with solved subtrack detections in frames 6 and 7, for example.
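The relinking rule in step 3 can be sketched as a small union-find over solved subtracks. This is an illustration under stated assumptions rather than the actual implementation: detections are hypothetical `(frame, detection_id)` tuples, and "does not span more than 3 frames" is read as last frame minus first frame plus one being at most `max_span`.

```python
def is_short_arc(subtrack, max_span=3):
    """True if the subtrack covers at most max_span frames from first to last."""
    frames = [frame for frame, _ in subtrack]
    return max(frames) - min(frames) + 1 <= max_span


def relink(subtracks, max_span=3):
    """Merge short-arc subtracks that share at least two detections into tracks."""
    short = [frozenset(s) for s in subtracks if is_short_arc(s, max_span)]
    parent = list(range(len(short)))

    def find(i):
        # Follow parent links to the group's representative, compressing as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(len(short)):
        for j in range(i + 1, len(short)):
            if len(short[i] & short[j]) >= 2:
                parent[find(i)] = find(j)

    tracks = {}
    for i, sub in enumerate(short):
        tracks.setdefault(find(i), set()).update(sub)
    return [sorted(t) for t in tracks.values()]
```

With this reading, a solved subtrack in frames 1, 2 and 8 is never used for relinking, while a frame 8 detection can still join a track through a solved subtrack in frames 6, 7 and 8.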

A closer look at a few individual tracks

Image set 2 shows 24 final tracks, but it's a little hard to see what's going on in an animation consisting of all of the linked detections at once. Below I'll highlight four tracks that demonstrate a couple of common ways that detections resolve into final tracks. The images below are a composite of the 8 difference image frames from image set 1 with one track highlighted per image.

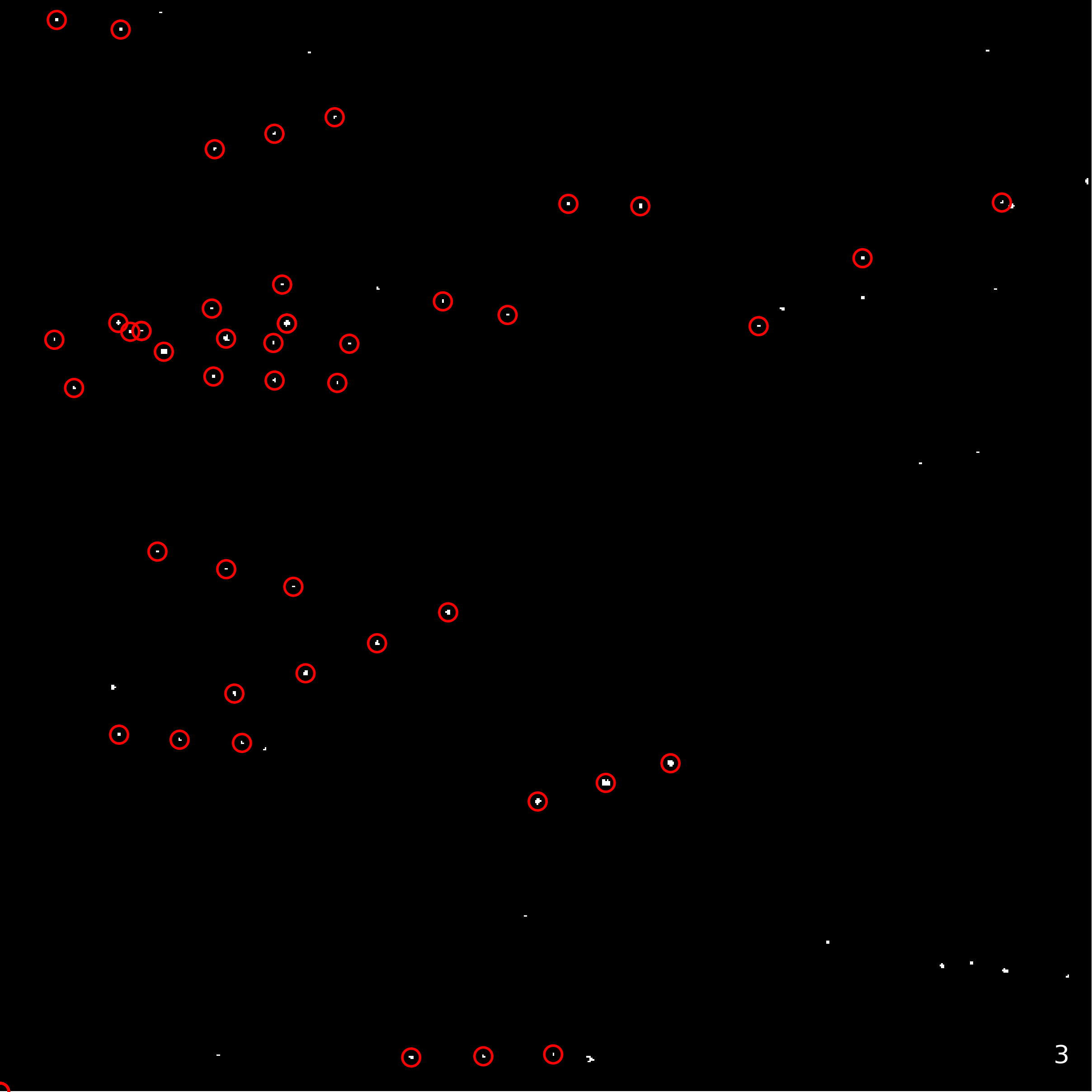

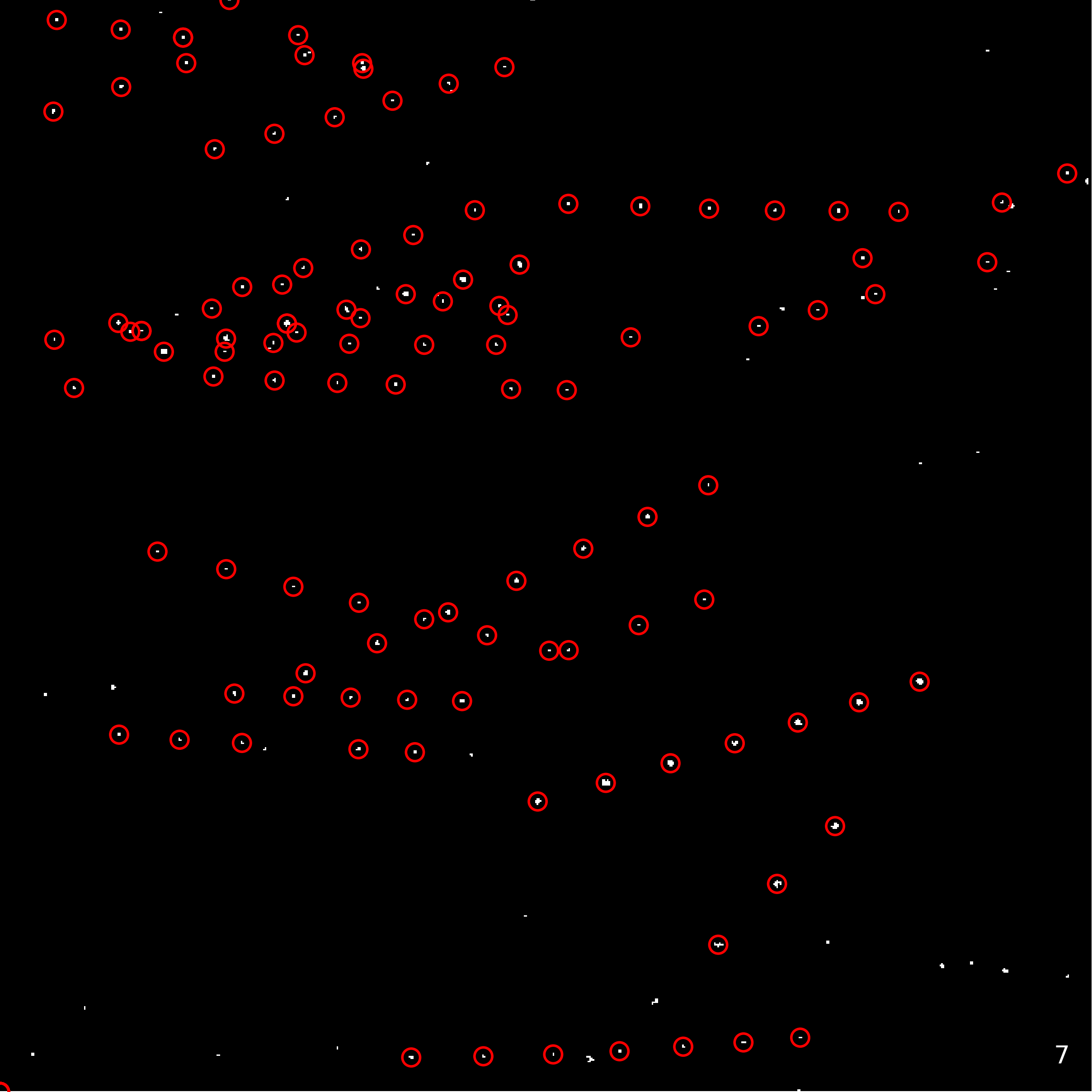

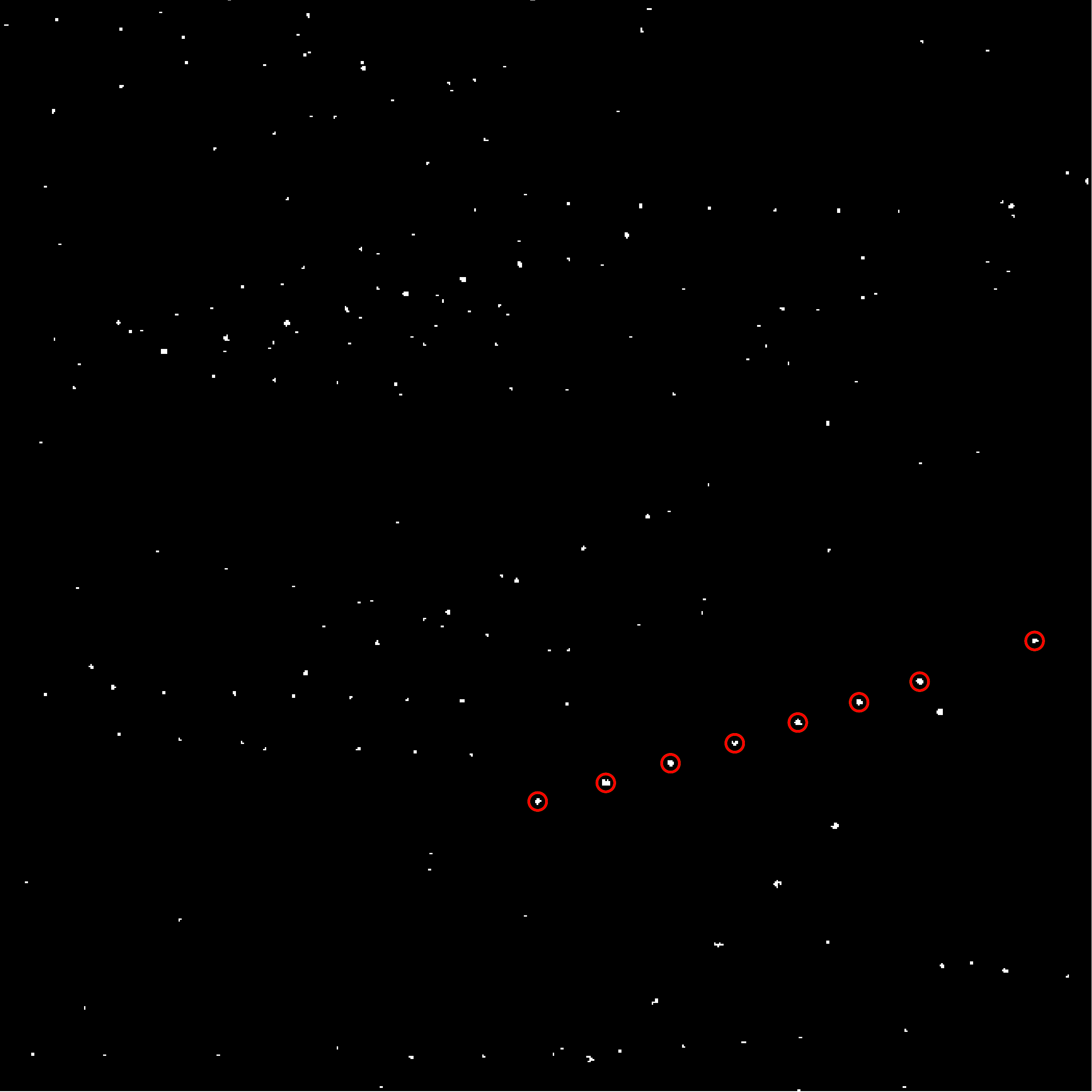

3. Feodosia: This is the 3 step linking process working smoothly. All 8 detections were discovered by preliminary linking, and the subtracks that solve include all 8 detections, which allows all 8 to be relinked into one object track.

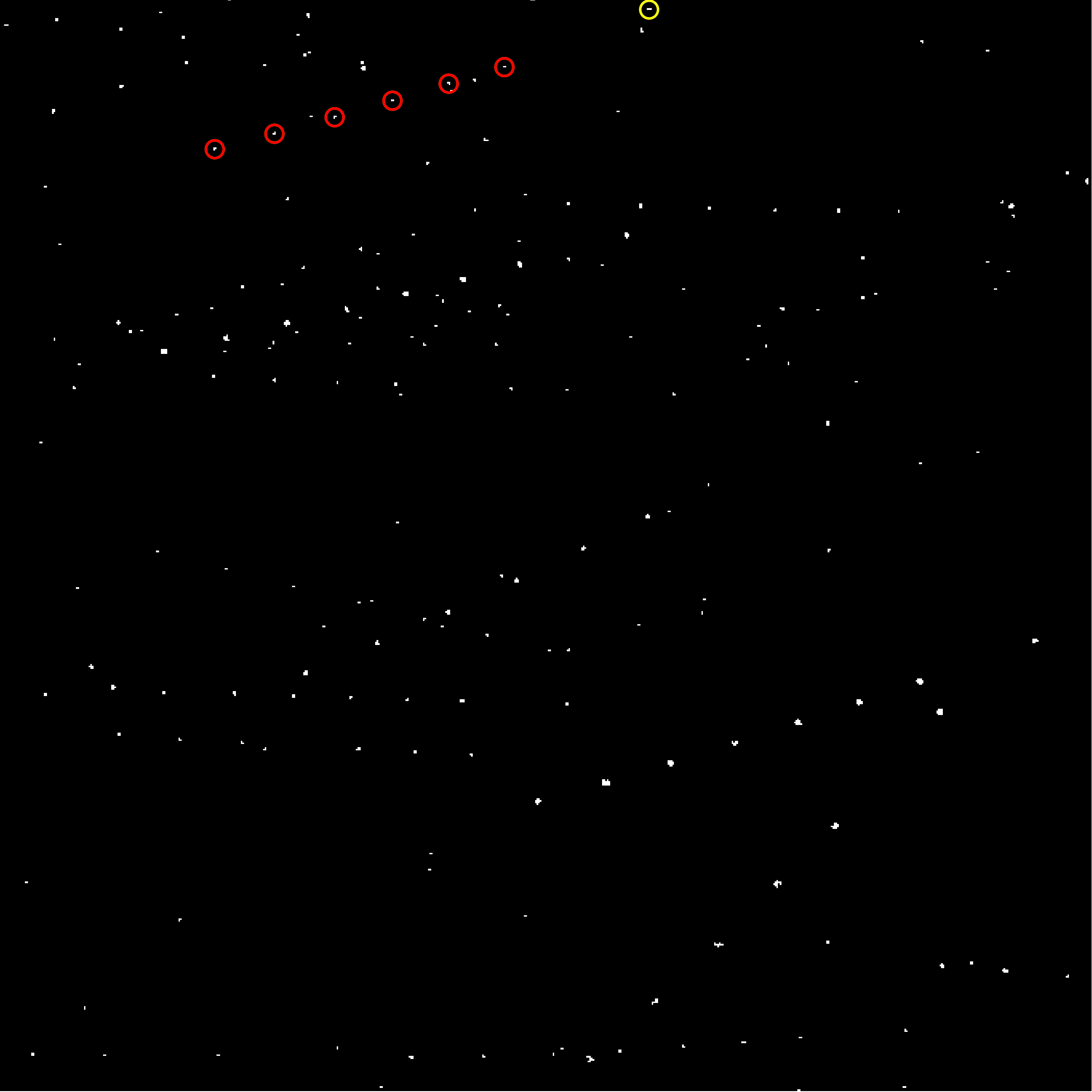

4. (1999 JN40): This is a common issue for linking to resolve. Each circle here represents a preliminary linked detection that has been solved for some subtrack combination. However, the yellow circle was discarded by the short arc constraint of relinking. The issue is that OD solves for the first two detections on the left plus the yellow detection. There's too long of a span between those detections and some spurious solutions can come out of OD over those longer arcs. Relinking correctly discards this yellow detection because there are no OD solutions between later detections (further right) and the yellow detection.

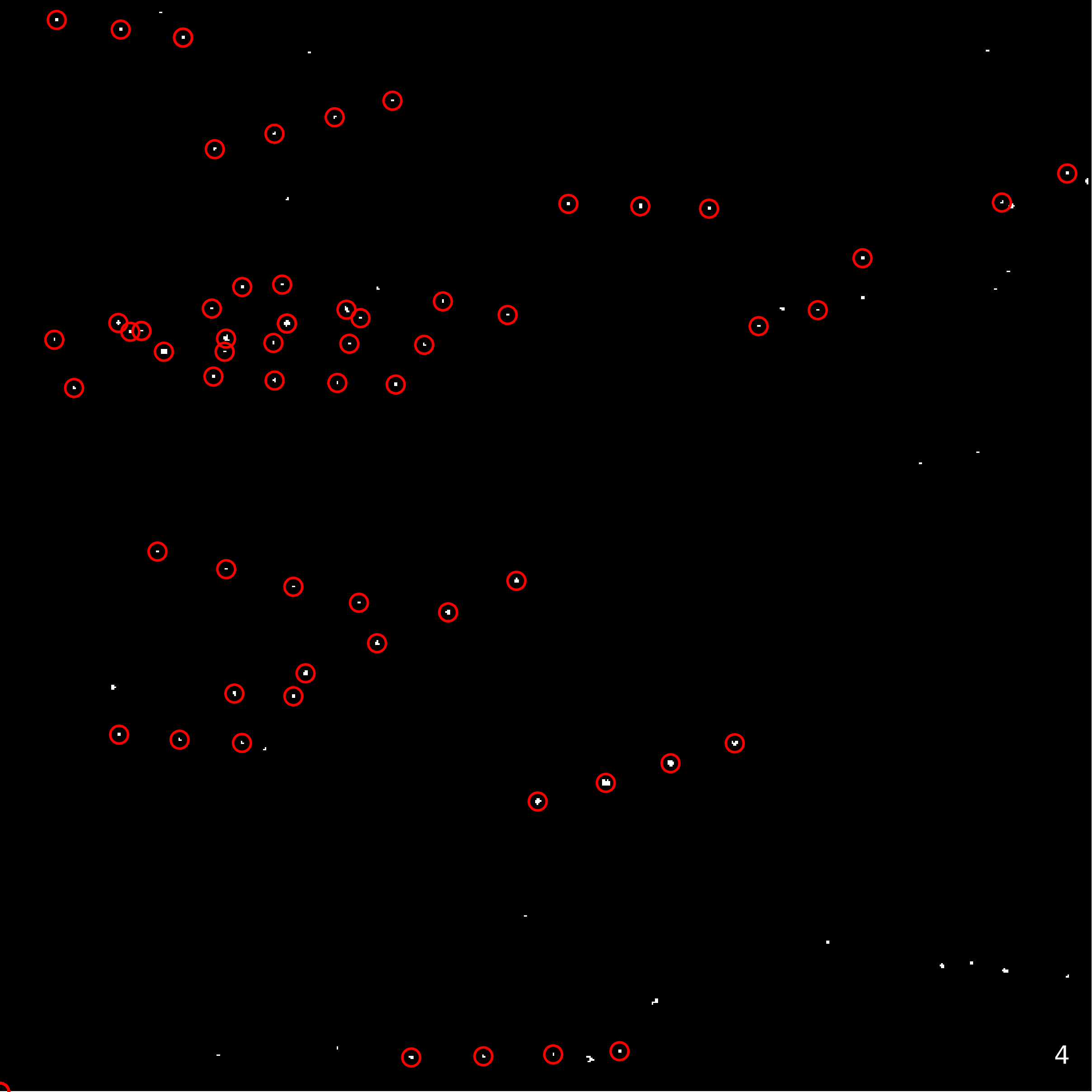

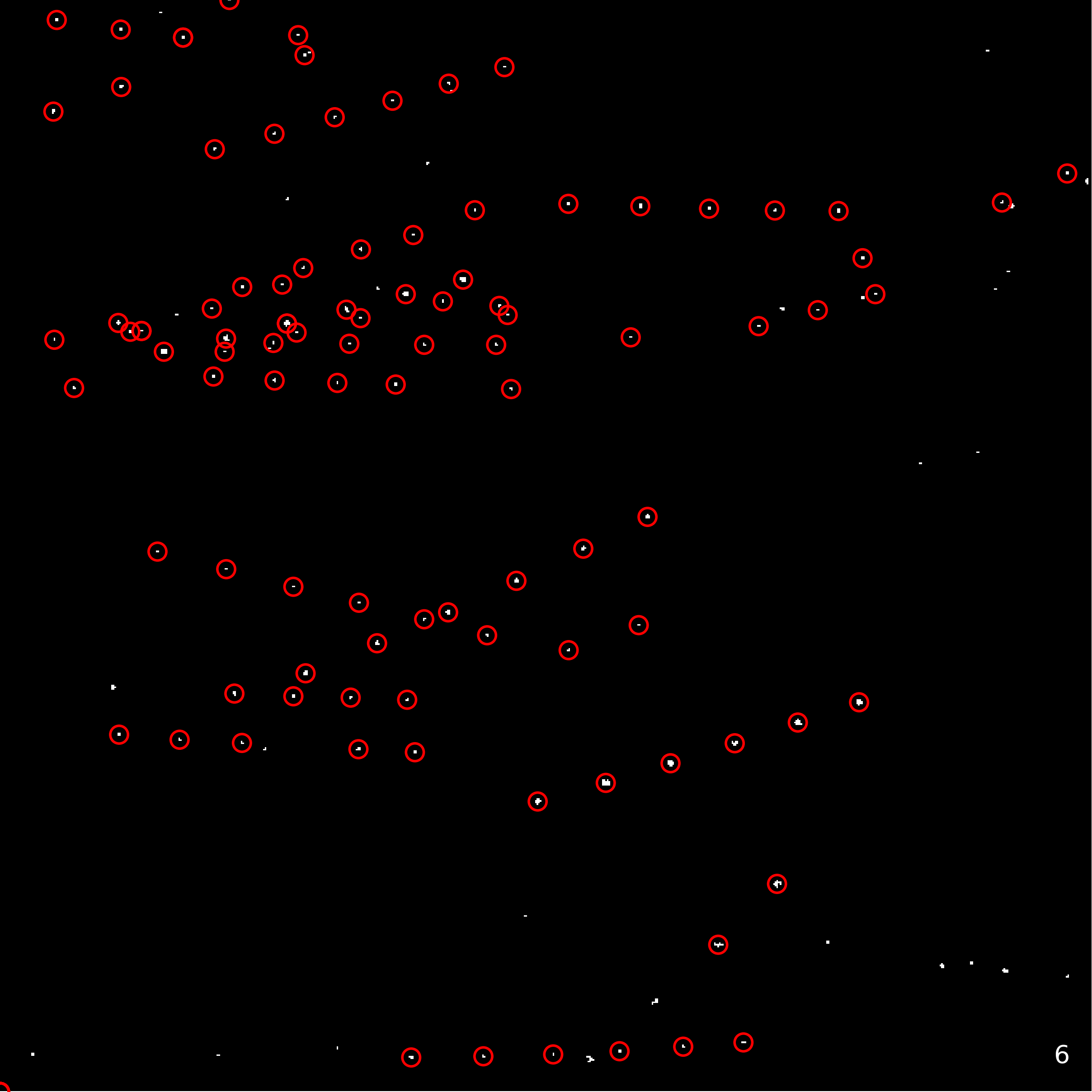

5. Riccati: This is a slightly more extreme example of the same issue present in image set 4. The two preliminary linked detections furthest to the right only solve with the detections furthest to the left. As such they are correctly discarded by the short arc constraint of relinking.

6. N/A: This is a bad track that I discovered while writing up this analysis. My relinking algorithm assembles final tracks from overlapping solved subtracks. In this instance I failed to reject overlapping solved subtracks whose third detections are both in the same frame (observation time). One object can't be in two different places at the same time. This should be easy to fix.
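One possible form of the missing check is sketched below. It is only a guess at how the fix could look, assuming detections are hypothetical `(frame, detection_id)` tuples: two subtracks may be relinked only if they share exactly two detections and their remaining detections fall in different frames.

```python
def can_relink(sub_a, sub_b):
    """True if two 3-detection subtracks share two detections and do not conflict.

    A conflict is when the two non-shared detections lie in the same frame,
    which would put one object in two places at one observation time.
    """
    shared = set(sub_a) & set(sub_b)
    if len(shared) != 2:
        return False
    (only_a,) = set(sub_a) - shared
    (only_b,) = set(sub_b) - shared
    return only_a[0] != only_b[0]
```

For example, `((1, "p"), (2, "q"), (3, "r"))` and `((1, "p"), (2, "q"), (3, "z"))` share two detections but would be rejected, because both third detections are in frame 3.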

Results

The final set of MPC formatted observations for the relinked tracks detected above (sans the bad track) is in the two files linked below. The first file (T313.mpc) contains the tracks alone and can be used directly as input to OpenOrb. The second file (T313v.mpc) adds the geocentric position vector of the TESS observatory at each detection's observation time, as required by the Minor Planet Center for data submitted from a space-based observatory.

Using JPL's ISPY tool I tried to match the 24 tracks to the physical objects they correspond to. 23 of the 24 were identifiable with ISPY. The one unknown object was the invalid track I showed in image set 6. I then used Horizons to look up the precise position of these known objects at the subframe observation time that has the maximum signal at the detection location of the difference image (a subframe being a single observation frame from the set of frames that eventually become difference images). Using TESS' WCS for each individual subframe I calculated the position for each detection in each track and found a median deviation of 7.96 arcseconds across all detections compared to the Horizons positions. Given TESS' 21 arcsecond pixel scale, that is about 0.38 of a pixel, which I think is a pretty good result. However, I should investigate the theoretical limits of precision for a given pixel scale. Maybe I'm falling short of what's possible.
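The median deviation quoted above is the kind of number the sketch below produces. It assumes the measured and reference positions are already available as (RA, Dec) pairs in degrees. The function names are hypothetical, and the haversine formula is just one standard way to get an angular separation, not necessarily the one used for the 7.96 arcsecond figure.

```python
from math import asin, cos, degrees, radians, sin, sqrt
from statistics import median


def separation_arcsec(ra1, dec1, ra2, dec2):
    """Great-circle separation in arcseconds (haversine); inputs in degrees."""
    ra1, dec1, ra2, dec2 = map(radians, (ra1, dec1, ra2, dec2))
    h = (sin((dec2 - dec1) / 2) ** 2
         + cos(dec1) * cos(dec2) * sin((ra2 - ra1) / 2) ** 2)
    return degrees(2 * asin(sqrt(h))) * 3600


def median_deviation(measured, reference):
    """Median separation between paired (ra, dec) positions, in arcseconds."""
    seps = [separation_arcsec(*m, *r) for m, r in zip(measured, reference)]
    return median(seps)
```

Dividing the result by the pixel scale (21 arcseconds for TESS) expresses the same deviation as a fraction of a pixel.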

Process notes:

- Linking: The 3 step linking process outlined above was the main process enhancement in this study. I said a lot about it already, but one other interesting component of the process is that it generates a count of how many times a given detection links and then solves against other detections. One or more detections are often 'keystone' detections for a track in that they solve with respect to other detections in the track more often than other detections do. This might be useful information to incorporate at some point.

- Bilinear interpolation for centroid subpixel positions: I'm calculating a centroid for detections in the difference images that yields a subpixel value (e.g. X: 200.5, Y: 300.3). That's the position of the detection. To find the most precise time measurement for that detection position I'm looping over the de-backgrounded, normalized subframes that the difference image is built from and selecting the observation time that has the maximum signal at that centroid location. I've been doing that for a couple of these studies now. The new part here is that I'm not finding the maximum at the nearest integer pixel location but am using bilinear interpolation to find the maximum at a subpixel location.
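The subpixel lookup described in the last note can be sketched as follows. This is a minimal illustration, not the nMOPS code: it assumes pixel centers sit at integer coordinates, the centroid is inside the image, each subframe is a plain list of rows indexed `[y][x]`, and the function names are hypothetical.

```python
from math import floor


def bilinear(image, x, y):
    """Interpolate image (list of rows, indexed [y][x]) at subpixel position (x, y)."""
    x0, y0 = floor(x), floor(y)
    # Clamp the upper neighbours so positions on the last row/column still work.
    x1 = min(x0 + 1, len(image[0]) - 1)
    y1 = min(y0 + 1, len(image) - 1)
    fx, fy = x - x0, y - y0
    top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
    bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def time_of_max_signal(subframes, x, y):
    """Return the observation time of the subframe that is brightest at (x, y).

    subframes: list of (time, image) pairs.
    """
    return max(subframes, key=lambda s: bilinear(s[1], x, y))[0]
```

For a centroid at X: 200.5, Y: 300.3, the interpolated value is a weighted blend of the four surrounding pixels rather than the value of the single nearest pixel.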

Ideas and next steps:

- Long arc filtering before OD: I can speed up the linking process runtime by rejecting long arc candidate links in the preliminary velocity vector clustering step, but I'm wondering if I'm going to want to see those links regardless of whether they get filtered later.

- Relinking fix: This should be an easy tweak. I just need to make sure that relinking doesn't relink two subtracks that have two overlapping detections with the third detections both occurring at the same observation time.

- Improve magnitude estimate: My apparent magnitude estimate is a bit noisy. I think I can improve this fairly easily. I'm using ISPY's magnitude data but I'm using it for the middle subframe observation time rather than the max signal observation time so the detection time is slightly off with respect to the ISPY magnitude data I'm using to calculate my detection magnitude.

- Maybe I should make a tool to pull ISPY data: Related to the above, there's no Python tool for retrieving ISPY data from JPL as far as I can tell. Perhaps I should make something simple that pulls data into a pandas DataFrame, comparable to the Horizons lookup tool.

- Is 7.96 arcseconds precision good enough for the MPC: If I want to submit data to the MPC, will this precision be enough? They're looking for sub 3" but I'm not sure that's achievable with TESS' fat pixels.

- Do I need multiple observations per night for MPC submission and is that achievable for slow moving objects: Another MPC requirement is that you submit multiple observations per night for a given detection to build a tracklet. With slow moving objects and TESS' fat pixels, objects are often still in the same pixel over that short of a span of time. I assume that you just scale this period up and build tracklets out of observations on sequential nights, but I need to check.

- Submit relinked tracks or subtracks that solve: Another MPC related question. Relinking builds up tracks from subtracks that solve, but not all relinked tracks solve with OD. Is it better to submit longer tracks that I know are observations of the same object or to submit shorter tracks that actually solve with OD?

Discussion

As I mentioned above, there are certainly more objects within this field than the 23 that I've linked. But by removing small objects from the difference images I've intentionally pared down the set of detections to demonstrate linking and object coordinate generation. In future studies I will likely be looking at dimmer and slower moving objects that necessitate the integration of more subframes, which tends to filter out the more numerous fast moving objects, so I won't need to remove detections in order to deal with fewer data points. That's probably what I'll work on next. Can I find a track in a TESS FFI that doesn't correspond to a known object? Probably not, but it's fun to look. One question I'm pondering right now is where to look. Should I just pick a TESS sector at random and see what my nMOPS tool can see? Or should I look at a sector that TESS is observing that isn't observed by other observatories, a "look where others aren't looking" approach? That's probably the ideal strategy, but I'll need to understand a lot more about current and previous survey footprints to know which regions have been observed before.

Published: 8/1/2020